Wired

The Pull of PNM: More Proactive, Less Maintenance Please

Key Points

- CableLabs and SCTE's proactive network maintenance working groups bring together operators and vendors to collaboratively develop tools and best practices that make network maintenance more proactive and efficient.

- These working groups offer opportunities for operators and vendors to shape the future of broadband network operations alongside other industry experts.

Proactive network maintenance (PNM) continues to make inroads on its goals of reducing troubleshooting time and cost while reducing the impact of network impairments on customer service. The broadband industry achieves this through grassroots efforts developed and shared by participants in two working groups. This global ecosystem of vendors and operators shares its ideas, efforts and challenges to help move the community further on the path to efficient operations and improved services.

In a recent face-to-face meeting, we continued to make progress on several of the working groups’ workstreams, sprint toward some near-term goals and set some long-term goals. And there is more to come!

Working Groups

CableLabs’ PNM Working Group (PNM WG) meets bi-weekly for all current work, with a focus on some specific workstreams on the off-weeks. SCTE's Network Operations Subcommittee Working Group 7 (NOS WG7) also focuses on proactive network maintenance and related workstreams. The CableLabs group focuses on developing the engineering and science to enable better PNM while NOS WG7 focuses on the field implementation of that engineering and science.

In addition, the chairs of these working groups meet often to manage the workstreams and ensure progress on key developments that benefit the industry most. Many members overlap between the two groups, which ensures an effective pipeline of research, development and implementation.

Workstreams

The proactive network maintenance working groups maintain progress on several developing workstreams, and a few of note have recently concluded.

Our Methodology for Intelligent Network Discovery (MIND) effort is an important workstream where we target repair efficiency through topology discovery and automation. Participants share developments and ideas on Thursdays, then bring the best results to Tuesday meetings, where we contribute canonical methods and software code for reference. This high cadence and open contribution are leading to well-developed ways to use channel estimation data for identifying features in the radio frequency (RF) network that aid in determining the ordinality and cardinality of network components. We accomplish this by first cleaning up the data to reveal the features in the signal, then applying clustering and pattern-matching methods to identify the features in the network and reveal the network's topology. As with all research, the first step is to demonstrate functionality, which we have done; now we are working to make the process reliable across diverse network designs and to adapt it to various PNM use cases.
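
As a rough illustration of the cleanup-then-clustering idea described above, the sketch below assumes each modem's channel estimate has already been reduced to a list of (delay, level) features. It is not the working groups' reference code: the function names, the thresholds and the sample data are all hypothetical. It drops weak features, groups the remaining ones by delay, and reports which modems share a feature, which may hint that they sit behind a common network element.

```python
def clean(features, min_level_db):
    """Cleanup step: keep only features at or above an assumed noise threshold."""
    return [(delay, level) for delay, level in features if level >= min_level_db]


def cluster_shared_features(modem_features, min_level_db=-35.0, max_gap_us=0.05):
    """Group features from all modems by delay (simple 1-D gap clustering).

    modem_features maps a modem id to a list of (delay_us, level_db) tuples.
    Returns (mean_delay_us, sorted_modem_ids) for clusters seen by 2+ modems.
    """
    points = sorted(
        (delay, modem)
        for modem, features in modem_features.items()
        for delay, _level in clean(features, min_level_db)
    )
    clusters = []
    for delay, modem in points:
        # Extend the current cluster while consecutive delays stay close together.
        if clusters and delay - clusters[-1]["delays"][-1] <= max_gap_us:
            clusters[-1]["delays"].append(delay)
            clusters[-1]["modems"].add(modem)
        else:
            clusters.append({"delays": [delay], "modems": {modem}})
    return [
        (sum(c["delays"]) / len(c["delays"]), sorted(c["modems"]))
        for c in clusters
        if len(c["modems"]) > 1
    ]


# Hypothetical sample data: (delay in microseconds, level in dB) per modem.
example = {
    "cm-a": [(0.50, -28.0), (1.20, -40.0)],
    "cm-b": [(0.52, -30.0)],
    "cm-c": [(0.51, -27.0), (2.00, -25.0)],
    "cm-d": [(2.03, -31.0)],
}
shared = cluster_shared_features(example)
for mean_delay, modems in shared:
    print(f"feature near {mean_delay:.2f} us shared by {modems}")
```

A real workflow would need a more robust clustering method and validation against known plant maps; the gap rule here is only the simplest thing that shows the shape of the process.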

The PNM WG has been working hard to develop generative AI (GAI) for Network Operations (NetOps), or more simply, AIOps. This group develops and shares the software and tooling to enable the output from NOS WG7 and PNM WG to be automated into network operations through retrieval-augmented generation (RAG) models and agentic AI solutions. In parallel, industry experts are peer reviewing the output from these GAI tools to ensure quality and engineering precision can be reliably delivered, improving on our own peer review process as we go.

Meanwhile, the PNM WG is developing standard ways to quantify impairments in the network as they are revealed in PNM data, referred to as the measurements work, to enable GAI applications and streamline PNM automation.

The PNM WG looks forward to publishing an update to our "Galactic Guide" at the beginning of 2026. The current version is available to the public. In the new version, you can look forward to receiving updates on the development of the measurements work and MIND work, in addition to initial methods to use Upstream Data Analysis (UDA), for those cable modems that can reveal the upstream spectrum.

New workstreams start when the groups complete earlier workstreams and queue up the next priority effort. The initial work on UDA was completed early this year, and more work on it is planned for the future. Likewise, NOS WG7 has recently completed outlining some new PNM training to come from SCTE, which updates the training to utilize new learning methods, and includes our newest knowledge about maintenance efficiency and proactivity.

PNM Face-to-Face

About once a year, CableLabs hosts a face-to-face meeting for these PNM groups and their members. We hosted a hybrid event in October with more than 30 participants representing many operators and vendors at our Colorado headquarters and through virtual attendance. Our time during the face-to-face focused on providing updates about our workstream progress, discussing potential future workstreams and developing content for the upcoming Galactic Guide update.

Near-Term Goals

The working groups are hyper-focused on a few key near-term results:

- Completion of the initial use case for channel estimation data to identify network features and determine the network topology. This is the MIND work.

- Unification on impairment quantification — and, specifically, the ability to monitor resiliency in DOCSIS® networks to transform PNM into managing capacity. Our measurements work will help operators better assess urgency with proactive repairs to prioritize work based on risk of customer impact.

- Identifying new UDA opportunities as new methods are published and further validated.

- Completing updates to the Galactic Guide, including incorporating new, more precise treatments of several important RF concepts published in our technical reports (such as several monographs published recently by SCTE’s NOS WG1).

Long-Term Goals

Accomplishments on our near-term goals open up new opportunities:

- The MIND work is not done; we still can develop better, automated localization for troubleshooting proactive and reactive network faults.

- One topic that will be important for our MIND goals is standardizing GIS information. This would allow the automation we created to work across tools and platforms for greater ease of use and broader adoption.

- We look forward to a forthcoming release of the new PNM training material from SCTE, which our expert participants intend to peer review further.

- As our work on GAI use for network operations continues, we intend to develop better input and better testing methods of these new tools to ensure their reliable application.

- The face-to-face meeting also revealed that we should make better use of our knowledge of noise, distortion and interference (NDI). By looking more closely and improving the categorization of these various signal impairments, we expect to identify their sources and locations through automation — which will help form additional efficient PNM opportunities.

Beyond Proactive Network Maintenance

While there is a substantial community working on RF and DOCSIS-related PNM, CableLabs doesn’t want to leave fiber out of the fun!

We are currently identifying near- and far-term opportunities in the passive optical networking (PON) world as well. As many operators push fiber deeper — all the way to the premises — the Optical Operations and Maintenance Working Group (OOM WG) works toward identifying use cases and aligning them to the telemetry that network elements provide. By better aligning PON and DOCSIS technologies, the group helps streamline network operations and maintenance further.

While the fun continues with DOCSIS technology and there is much more to develop, we are applying our experience with RF over coax to RF over fiber.

Whether your concerns are with maintaining DOCSIS or PON deployments, or ensuring network or service reliability, there is a community ready to work with you. Join a working group, and join the fun!

Wired

Future-Proofing Optical Networks: Streamlining Operations for a Smooth Transition to PON

Key Points

- Cable operators want to simplify operations as they embrace passive optical networking (PON), but maintaining and managing PON solutions is largely proprietary and different from other access networks, and it requires new tooling.

- Some unification of fault and failure management is desirable for the industry so that operators can reduce the need to swivel chair between networks and tools, and vendors can simplify what they support in their products.

- PON, like other optical networks, relies on proprietary management tools that are sometimes based on telemetry that is itself proprietary or extended from any of a number of standards or specifications.

- From our experience helping the industry align on operations for DOCSIS technology, CableLabs knows this could all be better for everyone.

Imagine you’re a network engineer trying to manage the capacity of your DOCSIS networks — and suddenly you’re tasked with learning a whole new set of additional tools, techniques and terminologies to manage a new type of access network: a passive optical network (PON).

Now apply that complexity to the entire operation, the many technicians involved and all the tools necessary to accomplish the task. That’s the level of difficulty that many network operators face today as they begin to deploy PON technology for their access networks. Maintaining and managing PON solutions is largely proprietary and very different from other access networks, requiring new tooling and expertise.

Needless to say, operators are in the midst of a massive transition. Instead of operating a few flavors of DOCSIS® networks, they’re managing an even broader set of technologies in their access networks. But these challenges don’t need to be as significant as they are.

By aligning the industry with certain architectures and identifying the key telemetry, building solutions that support use cases in ways that streamline operations, and reducing the burden of broad and overlapping options for vendors, we can shrink the time to market for improved technologies while streamlining our networks overall.

To help operators rise to the challenge, CableLabs is not only committing resources in this direction but has also established a working group called Optical Operations and Maintenance (OOM) that tackles the aforementioned complexities head-on.

The Optical Operations and Maintenance Working Group

The OOM working group has the scope of aligning fault and failure management of optical networks to streamline operations. The stated objectives of this program and working group are to “reduce troubleshooting and problem resolution time and costs while increasing network capacity and uptime.” These objectives include:

- maintenance that is proactive, reactive, predictive and more

- attention to the physical layer and related functions

- telemetry alignment, solution development and more

If you've been around the industry a bit, these objectives might seem familiar. Indeed, the OOM working group’s objective statement is very similar to that of CableLabs’ Proactive Network Maintenance (PNM) working group. We could have called OOM “Optical PNM,” but we recognize that operators have goals beyond PNM when it comes to optical networks. Our charter for this work matches what operators and vendors say they need.

Although that scope seems large (and it is!), OOM is also focused on quickly turning around valuable results. The current course of the working group is like a peloton of bicycles in a race: In a cooperative effort, OOM will share outcomes with a parallel working group that addresses other fiber to the premises (FTTP) challenges since the working group is indeed addressing one FTTP challenge—common provisioning and management.

By working together, we accelerate the work of both groups. When it comes time for OOM to move to the next optical challenge, we'll have the momentum we need to push forward.

Creating Industry Alignment Through Standards and Specifications

Architecture is the foundation for operations in that network operations must manage the network components and systems. How the network does its job to provide service — and how it fails to do so — drives what needs to be managed and monitored. Fault and failure monitoring use cases drive the information needed, and therefore the network telemetry and other data that network operators need.

The architecture connects through the system failures and service impacts to the telemetry that supports the network operations use cases. Through a traceability approach, OOM identifies the necessary telemetry that addresses operator needs, choosing from existing standards and specifications when possible. The process we follow will create industry alignment on what is important in standards and specifications today. That methodology eases the burden for vendors.
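
To make the traceability idea concrete, here is a minimal sketch that walks from an architecture component, through its failure modes and the use cases that address them, down to the telemetry those use cases need. Every component, failure mode, use case and telemetry name in it is a hypothetical placeholder chosen for illustration, not an item from the OOM working group's output or from any specification.

```python
# All names below are hypothetical placeholders, not OOM deliverables.
FAILURE_MODES = {
    "optical_distribution_network": ["fiber_bend_loss", "dirty_connector"],
    "onu": ["transmitter_degradation"],
}
USE_CASES = {
    "fiber_bend_loss": ["localize_optical_loss"],
    "dirty_connector": ["localize_optical_loss"],
    "transmitter_degradation": ["predict_onu_failure"],
}
TELEMETRY = {
    "localize_optical_loss": ["rx_optical_power", "tx_optical_power"],
    "predict_onu_failure": ["tx_optical_power", "laser_bias_current"],
}


def trace_telemetry(component):
    """Return {telemetry item: sorted use cases needing it} for one component."""
    needed = {}
    for failure in FAILURE_MODES.get(component, []):
        for use_case in USE_CASES.get(failure, []):
            for item in TELEMETRY.get(use_case, []):
                needed.setdefault(item, set()).add(use_case)
    return {item: sorted(cases) for item, cases in needed.items()}


def required_telemetry():
    """Union of telemetry items justified by at least one traced use case."""
    items = set()
    for component in FAILURE_MODES:
        items.update(trace_telemetry(component))
    return sorted(items)


print(trace_telemetry("onu"))
print(required_telemetry())
```

The useful property of such a mapping is that every telemetry item in the final list can be traced back to a use case and a failure mode, so anything that cannot be traced is a candidate to leave out.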

Reducing complexity is a first alignment step, but we also want to drive consistency. That consistency will focus on current cable operations as well as the other networks that operators manage. By targeting PON options that align best with DOCSIS network advantages that operators enjoy today, we reduce the operations burden. It then becomes possible to agree on common tools, and thereby reduce the cost of network operations. By enabling the alignment of optical network management, we take that one step further.

We’ve already seen the potential of OOM's work in PON, but the next steps will present a whole new challenge. Introducing aligned use cases to connect the architecture to the telemetry can reduce the complexity of vendor developments and operator tools. We have to drive that advantage to other networks, too.

Looking Ahead to New Optical Challenges

Those next optical challenges I mentioned before? We’ll set our sights toward the core next: other forms of optical access, optical trunk systems such as point-to-point (P2P) coherent optics, Metro Optical Ethernet (MOE) and backhaul and ring and mesh systems, regional optical networks, backbone networks, and maybe beyond. One could say there is light at the end of the fiber!

If you see this as an opportunity to streamline your business in the optical world, engage with CableLabs on any of a number of our optical networking efforts and consider being a part of the OOM working group. We invite you to see the light!

DOCSIS

Ready, Set, 4.0: Tooling Up for DOCSIS Technology’s Rollout

DOCSIS® 4.0 deployment is almost here. Are you ready?

Fortunately, CableLabs is getting more prepared every day. A DOCSIS 4.0 Tools and Readiness working group has been hard at work, focused solely on this new technology and aiding operators in deploying it. The working group, part of SCTE’s Network Operations Subcommittee, has been preparing for deployment for several months and will continue that work as the industry learns more about how to build, deploy and operate services on DOCSIS 4.0 networks.

CableLabs, SCTE, and several operators and vendors are collaborating to assure timely delivery of available knowledge to assist operators as they prepare to deploy DOCSIS 4.0 technology. And that technology is almost here.

In July, CableLabs completed an Interop·Labs event to prove DOCSIS 4.0 cable modems can function as intended with DOCSIS 3.1 cable modem termination systems (CMTSs). Anticipating this development, CableLabs and industry colleagues — many of whom participate in SCTE Working Group 5 (WG5) — released a public document that shared our knowledge thus far and our plans for future development of capabilities and tools.

Proactive DOCSIS 4.0 Strategies

WG5’s report, “Cable Operator Preparations for DOCSIS 4.0 Technology Deployment,” assumes that the technology is not readily available yet, so it addresses what operators can do now to prepare. It focuses on network operations, covering early preparations and assessment of network segments and customers. The report also dives deeper into details to find the best early implementation locations and discusses how to make use of information available from the existing DOCSIS 3.1 networks.

With this technical report, we’re helping the cable community prepare their networks and operations for this new technology, which will enable the 10G platform of the future. Operators are already getting started.

“DOCSIS 4.0 represents a significant technological step forward,” said Will Berger, vice president of Telemetry Development for Charter Communications, a CableLabs member company. “By preparing now, operators can gain a valuable head start on what will be a collective challenge for the industry. While the technical aspects of this evolution are well-known, the opportunity to gain valuable operational preparation time is critical to ensuring a smooth transition to the 10G future.”

So, what happens next? WG5 is focusing on several important problems that we expect could emerge in early deployments of DOCSIS 4.0 technology. Some of these issues include:

- Interference due to higher transmission levels.

- Noise that occurs in new frequencies and new directions in the access network.

- Frequencies that are blocked due to legacy hardware in the network.

- Signal impairments that might reveal themselves at higher frequencies or at higher power.

- Leakage that might occur at new frequencies and interfere with new higher-frequency bands.

- How to operate new amplifiers and echo cancelers.

- How to manage the profiles in this new and complex network technology.

In addition, as we learn the severity and nature of any problems in these areas, we’ll be thinking about how to use our existing tools as well as what other tools we’ll need to develop to maintain our networks at the high degree of quality needed to support DOCSIS 4.0 services.

Collaborating for Industry Impact

If all this seems daunting, you’re right. In fact, we could use your help. If you’re in the cable industry, consider joining and contributing your knowledge and expertise to the working group. It’s an opportunity to learn at the earliest possible time what problems are becoming most significant, which methods are working or not working, which techniques may be automated into tools and which approaches require new vendor tools.

Working groups are part of the SCTE Standards program, a resource that is available to employees of our member companies as part of the complimentary CableLabs All Access Benefits program.

As you work to prepare and operate DOCSIS 4.0 technologies in your network, give yourself a head start by learning from others what works and what doesn’t. Better yet, share your ideas to get feedback faster and focus your efforts, while also helping others along the challenging path of technology evolution.

Also, for more about optimizing our networks, join “PNM Live!,” a panel discussion at SCTE® Cable-Tec Expo® on October 18. Experts, including CableLabs’ Jason Rupe, will discuss proactive network maintenance and how we can solve problems before customers are impacted.

HFC Network

Managing Network Quality and Capacity With Proactive Network Maintenance

You probably know that Proactive Network Maintenance (PNM) is about finding and fixing problems before they impact the customer to ensure highly reliable and available cable broadband services. But the other side of PNM is about managing the capacity or bandwidth available in the network. PNM may have started with the former concept in mind, but the latter is becoming more important as we rely on higher amounts of capacity at the edge. As the world adjusts to life during the COVID-19 pandemic, access network capacity is becoming even more critical. PNM is an important toolset for network capacity management, and CableLabs is helping operators manage network quality and capacity together.

Network condition impacts network capacity. Network impairments, a broad class of failures and flaws in the ability of a network to carry data, have to be addressed before they lead to service failure. The DOCSIS® protocol is a method for sending data over multiple radio frequencies in hybrid fiber-coax networks, and comes with several resiliency mechanisms, like profile management, that help service continue in spite of impairments, to a point. These impairments in the cable plant may impact a few or all frequencies. Impairments that impact specific frequencies may or may not be compensable on those frequencies. If severe, the impairment may impact the data carried on those frequencies entirely, leading to correctable or even uncorrectable data errors. If not severe, profiles may be able to adjust to lower modulation orders, so that less data is carried than otherwise, but carried reliably. Impairments that impact a larger number of frequencies of course have a greater impact on the bandwidth the network can carry. In any case, impairments impact the capacity that the operator can get from the access network.
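
A small worked example may help show how a drop in modulation order translates into lost capacity. The sketch below counts raw bits per symbol only (log2 of the QAM order) and ignores error-correction and framing overhead, so it is an approximation under stated assumptions. The subcarrier counts and the function names are hypothetical.

```python
import math


def bits_per_symbol(qam_order):
    """Raw bits carried per symbol for a square or cross QAM constellation."""
    return int(math.log2(qam_order))


def relative_capacity(subcarrier_orders, clean_order):
    """Fraction of the clean-plant raw bit loading that is still carried."""
    carried = sum(bits_per_symbol(order) for order in subcarrier_orders)
    ideal = bits_per_symbol(clean_order) * len(subcarrier_orders)
    return carried / ideal


# Hypothetical channel: 1000 subcarriers, 200 of which fall back
# from 4096-QAM (12 bits) to 256-QAM (8 bits) because of an impairment.
orders = [4096] * 800 + [256] * 200
remaining = relative_capacity(orders, clean_order=4096)
print(f"{remaining:.1%} of raw capacity remains")
```

Here the service keeps working, yet roughly one-fifteenth of the raw capacity is gone, which is the sense in which an impairment is a capacity problem before it is an outage.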

For example, consider that operators often place upstream bandwidth into lower frequencies, near where radio and electrical interference can enter the network through damaged cable or loose connectors. Upstream profiles can help make these frequencies useful when otherwise impaired; PNM can help operators find, work around, and fix ingress issues before they impact service. If the cable is damaged in multiple places (or, say, water gets into the cable after wind causes it to move and become loose or damaged), then multiple frequencies can be impacted. But DOCSIS mechanisms help services be robust to these problems, and PNM can alert the operator to the problem, allowing a proactive fix.

PNM is a practical set of tools for network operators to manage network conditions, which becomes even more important as we move toward higher utilization of the access network capacity. As demand for bandwidth increases at the edge, PNM becomes an important network capacity management tool for network providers. The difference between a perfect network and one with flaws felt by customers begins to shrink. PNM begins to be an imperative; it is “table stakes” for maintaining communications services and managing the capacity of the network.

Almost all of us share our connection to the internet and our communication services, whether fiber or coaxial cable is the final connection to the home. Over the years, DOCSIS has grown to provide much higher data rates over a shared medium, in addition to adding resiliency. Cable Modem Termination Systems (CMTSs) enable the network resources to be shared efficiently, so that we all have access to better communications through economies of scale, allowing us all to take advantage of the capacity available. Service providers can manage the network capacity with a number of methods to make sure service needs are met, and PNM is one of those mechanisms.

CableLabs has been working with these issues in mind for some time. In July of 2019, I wrote on the subject of 10G and reliability, pointing out that higher bandwidth solutions closer to the customer will be required for 10G. Then, in August, I wrote on the subject of reliability from a cable perspective and pointed out that the impairments addressed through PNM impact capacity. So, we see that reliability and network capacity are closely coupled. As we move toward higher bandwidth services, expand the utilization of frequencies and further push the limits of technology, reliable and sufficient bandwidth become highly coupled. Therefore, so do the tools that network providers use to manage these service qualities. CableLabs is working on solutions to help operators succeed in this reality.

Reliability

Network Capacity Management Using Proactive Network Maintenance

You probably know that Proactive Network Maintenance (PNM) is about finding and fixing problems before they impact the customer. But the other side of PNM is about managing the capacity or bandwidth available in the network. PNM may have started with the former concept in mind, but the latter is becoming more important as we rely on higher amounts of capacity at the edge. As the world adjusts to life under the COVID-19 pandemic, access network capacity is becoming even more critical. PNM is an important tool set for network capacity management, and CableLabs is helping operators manage network quality and capacity together.

Network condition impacts network capacity. Network impairments, a broad class of failures and flaws in the ability of a network to carry data, have to be addressed before they lead to service failure. The DOCSIS® protocol is a method for sending data over multiple radio frequencies in hybrid fiber-coax networks, and comes with several resiliency mechanisms that help service continue in spite of impairments, to a point[1]. These impairments in the cable plant may impact a few or all frequencies. Impairments that impact specific frequencies may or may not be compensable on those frequencies. If severe, the impairment may impact the data carried on those frequencies entirely, leading to correctable or even uncorrectable data errors. If not severe, profiles may be able to adjust to lower modulation orders, so that less data is carried than otherwise, but carried reliably. Impairments that impact a larger number of frequencies of course have a greater impact on the bandwidth the network can carry. In any case, impairments impact the capacity that the operator can get from the access network.

This is why PNM, which is an important set of tools for network operators to manage network condition, becomes even more important as we depend more on our network capacity and move toward higher utilization of the access network capacity. As demand for bandwidth increases at the edge, PNM becomes an important network capacity management tool for network providers. The difference between a perfect network and one with flaws felt by customers begins to shrink. PNM begins to be an imperative; it is “table stakes” for maintaining communications services and managing the capacity of the network.

CableLabs has been working with these issues in mind for some time. In July of 2019, I wrote on the subject of 10G and reliability, pointing out that higher bandwidth solutions closer to the customer will be required for 10G. Then, in August, I wrote on the subject of reliability from a cable perspective and pointed out that the impairments addressed through PNM impact capacity. So, we see that reliability and network capacity are closely coupled. As we move toward higher bandwidth services, expand the utilization of frequencies and further push the limits of technology, reliable and sufficient bandwidth become highly coupled. Therefore, so do the tools that network providers use to manage these service qualities. CableLabs is working on solutions to help operators succeed in this reality.

Recently, CableLabs announced the release of a new capability in our Proactive Operations (ProOps) platform that uses RxMER per subcarrier and profile information to inform the selection of PNM opportunities. Also, our PNM working group announced the release of our DOCSIS 3.1 PNM primer of engineering practices, which we intend to develop toward best practices for the industry. If you are an operator or vendor interested in this subject, contact us for more information and to help us develop this solution for the industry.
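
To give a feel for how RxMER per subcarrier and profile information could be combined to rank PNM opportunities, here is a minimal sketch. It is not the ProOps implementation. The RxMER thresholds, function names and modem data are hypothetical assumptions; real thresholds depend on the operator's profile design.

```python
# Hypothetical minimum RxMER (dB) assumed for each bit loading, highest first.
ASSUMED_MIN_RXMER_DB = [(41.0, 12), (35.0, 10), (29.0, 8), (23.0, 6)]


def supported_bits(rxmer_db):
    """Highest bit loading whose assumed RxMER threshold is met, else 0."""
    for threshold_db, bits in ASSUMED_MIN_RXMER_DB:
        if rxmer_db >= threshold_db:
            return bits
    return 0


def capacity_shortfall(rxmer_per_subcarrier, profile_bits):
    """Bits per symbol period the modem cannot carry versus its profile."""
    return sum(
        max(profile_bits - supported_bits(rxmer), 0)
        for rxmer in rxmer_per_subcarrier
    )


def rank_pnm_opportunities(modems, profile_bits=12):
    """Return (modem, lost_bits) pairs, largest shortfall first, zeros omitted."""
    scored = [
        (capacity_shortfall(rxmer, profile_bits), modem)
        for modem, rxmer in modems.items()
    ]
    return [(modem, lost) for lost, modem in sorted(scored, reverse=True) if lost > 0]


# Hypothetical per-subcarrier RxMER readings (dB) for three modems.
modems = {
    "cm-1": [42.0, 42.5, 43.0, 41.5],
    "cm-2": [42.0, 36.0, 30.0, 42.0],
    "cm-3": [40.0, 42.0, 42.0, 42.0],
}
ranked = rank_pnm_opportunities(modems)
print(ranked)
```

Ranking by lost bits rather than by raw RxMER ties the maintenance priority to the capacity the operator stands to recover, which is the link between PNM and capacity management that this article describes.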

______________________________________________________________________________________

[1] Because of the resiliency of DOCSIS® technology, impairments in the network have an impact on the capacity available in the network for serving customers, even when service remains functional, and even when customers may not notice right away or always. Without resiliency, an impairment leads to failure. Network resiliency is what keeps service running over impairments, which lets operators fix problems before they become severe and provide highly reliable services.

Reliability

Cable-centric Reliability

No doubt our cable industry has a unique culture of working and innovating together to solve technical issues. But there are best practices from other communities that we can build on; these practices inform how we can continue to develop toward more reliable services. By “reliable,” as it relates to service, I mean reliable, available, and resilient services, which result from reliable, available, resilient, repairable, maintainable, and highly performing cable networks, not to mention operations focused on the customers’ needs. Used in its specific sense, however, reliability refers to the probability of not experiencing failure, whereas availability refers to the expected proportion of time that something is working as intended. These are closely related, but distinct, concepts. But when we speak generally about reliability, many of these related concepts are often relevant.
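
A short numerical sketch can show how different the two specific terms are. It assumes a constant failure rate (the exponential model) for reliability and the standard steady-state ratio MTBF / (MTBF + MTTR) for availability. The MTBF and MTTR figures are hypothetical, chosen only to make the contrast visible.

```python
import math


def reliability(failure_rate_per_hour, hours):
    """Probability of no failure over the period, assuming a constant failure rate."""
    return math.exp(-failure_rate_per_hour * hours)


def availability(mtbf_hours, mttr_hours):
    """Expected long-run proportion of time the item is working."""
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Hypothetical figures: one failure per year on average, four hours to repair.
mtbf = 8760.0
mttr = 4.0
r_year = reliability(1.0 / mtbf, 8760.0)
a = availability(mtbf, mttr)
print(f"one-year reliability: {r_year:.3f}")
print(f"availability: {a:.5f}")
```

With these inputs the item is working more than 99.95% of the time, yet the chance of getting through a full year with no failure at all is only about 37%. High availability and high reliability are not the same claim.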

What is Unique About Cable Relating to Reliability Concepts?

For one thing, DOCSIS® networking is unique. Each version of DOCSIS technology has improved performance and also increased the robustness of the services it supports. Error correction, profile management, pre-equalization, echo cancelers, and other technologies have enabled this performance extension, and they also create separation between network impairments and service failures, allowing for maintenance before service is impacted.

Another unique advantage is Proactive Network Maintenance (PNM). The advantages of DOCSIS technologies are what make PNM possible. We use data to find impairments in the network that, left untreated, will eventually impact service. This capability affords operators the opportunity to find and remove impairments early, before the network is further damaged by degradation and service is impacted severely. Networks can be maintained well, and services remain available even while the network is experiencing failure.

Cable operators and vendors in cable have analog radio frequency (RF) expertise with a digital mindset. The cable industry knows RF, and that knowledge has helped it get the most out of the physical layer of the network. That deep understanding of the network’s physical layer is why mitigating network failure modes is second nature, and the industry has the needed skills.

Then there’s the industry’s “laser focus.” Pushing fiber out deeper into the network can improve reliability and availability, but current technology does lack some of the PNM advantages. There is work to do, but the capabilities are there for us to develop.

What Are the Best Practices We Can Re-use?

Designing communication networks for reliability draws on many best practices and much experience.

- The ability to understand and mitigate failures before deployment – We have defined PNM use cases based on the measurements we’ve been able to define in the DOCSIS specifications. Now, we must extend that work to link to failure modes, effects, and criticality analysis, and root cause analysis, to inform technology choices, measurements for management, and design for reliability.

- Condition-based maintenance – Maintenance optimization research is clear that in any practical situation it is almost always more cost efficient to base maintenance on condition information rather than age information.

- Prognostics and Health Management (PHM) – A newer field of reliability, PHM is a lot like our PNM. PHM is a research field of study using data sources (e.g., vibration in mechanical systems, or charge time in batteries) to determine the remaining useful life of a component or system. PNM is a clear cousin to that field, so we can certainly share and gain benefit from that work.

- Certification testing – Certifying cable modems (CMs) has improved the PNM responsiveness of CMs, and the same can be true about cable modem termination systems (CMTSs) as that part of the network begins to align.

- Maintenance optimization – Service reliability and availability, in addition to network reliability, availability and robustness, are important focuses for the industry; they are related but distinct, and each is important in its own right. The network can fail while service continues to perform at a high level, so maintenance can be better planned in this situation.
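The condition-based and PHM ideas above can be illustrated with a minimal sketch. It assumes a single hypothetical health metric sampled over time, fits a straight line to it, and estimates how long until the trend crosses a failure threshold. The function name, the metric, and the threshold are illustrative assumptions, and a linear trend is only the simplest possible degradation model.

```python
from statistics import mean

def estimate_remaining_life(times, readings, failure_threshold):
    """Fit a least-squares line to (time, reading) pairs and return the time
    left until the line reaches failure_threshold. Returns None when the trend
    is flat or is not heading toward the threshold."""
    if len(times) < 2 or len(times) != len(readings):
        raise ValueError("need at least two matching time/reading pairs")
    t_bar, r_bar = mean(times), mean(readings)
    sxx = sum((t - t_bar) ** 2 for t in times)
    sxy = sum((t - t_bar) * (r - r_bar) for t, r in zip(times, readings))
    slope = sxy / sxx
    if slope == 0:
        return None  # no trend, so no predicted crossing
    intercept = r_bar - slope * t_bar
    crossing_time = (failure_threshold - intercept) / slope
    remaining = crossing_time - times[-1]
    return remaining if remaining > 0 else None

# Hypothetical metric that drops one unit per day; failure assumed at 30.
days = [0, 1, 2, 3]
metric = [40, 39, 38, 37]
print(estimate_remaining_life(days, metric, failure_threshold=30))
```

Scheduling work from an estimate like this, rather than from equipment age, is the essence of condition-based maintenance.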

Thoughts for the Future of Cable

- More options mean more standardization – Adding more options to the technology choices allows operators to better meet the unique needs of their customer base. However, keeping it all standardized increases operability and repairability so that service is highly reliable and available.

- Each feature needs measurements – As we add options and features to cable technologies, each option needs special measurements to assure that the feature can be managed properly. DOCSIS 4.0 technology is full of options, so we’ll need a critical eye on each to make sure those options can be operated reliably.

- Pushing the limits of technology requires more diligence on PNM – As we rely on tighter tolerances and more complexity on issues like upstream noise, echo cancelation, and error correction, we need more information about how those perform, and more diligent PNM practice relating to them.

- Impairments relate to capacity and network resilience – As capacity becomes a stronger focus, the impact of impairments on that capacity becomes more important, so capacity and cable network reliability are entwined.

- As we push higher capacity to the edge, redundancy must come with it – With more capacity comes more critical services, and more impact on the lives of customers, so each failure becomes more impactful. As the cost of failure rises, large failures become especially expensive, driving the need for more network resiliency, and thus more redundancy.
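To make the redundancy point concrete, here is a small sketch of the standard availability calculation for redundant elements. It assumes the redundant copies fail independently and that service stays up as long as at least one copy works. The 99% figure is an arbitrary illustration, not a measured value.

```python
MINUTES_PER_YEAR = 365 * 24 * 60

def parallel_availability(component_availability, copies):
    """Availability of `copies` independent redundant components,
    where service survives as long as any one of them is working."""
    return 1.0 - (1.0 - component_availability) ** copies

def annual_downtime_minutes(availability):
    """Expected minutes of downtime per year at a given availability."""
    return (1.0 - availability) * MINUTES_PER_YEAR

single = 0.99  # illustrative availability of one element
for copies in (1, 2):
    a = parallel_availability(single, copies)
    print(copies, round(a, 6), round(annual_downtime_minutes(a), 2))
```

Under these assumptions, a second independent copy cuts expected downtime by a factor of one hundred, which is why redundancy becomes attractive as the cost of each failure grows.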

Strong Foundation, Strong Future

Building on a strong foundation of PNM and DOCSIS technologies, the cable industry has the right culture and technology foundation to take communications to a reliable future. We have lots of work to do, but we’re on the right path to do it. Here we go!

10G

10G, Reliably

The 10G platform is going to provide reliable service. As the cable industry embarks on the development of 10G services, there is a lot of work ahead, but we already have a strong foundation of experience and technology to build upon.

The 10 Gbps goal is about performance. But it must come with low cost, high quality, and sufficient reliability. 10G services have to be easy to install reliably, remain stable and robust against cable plant variations and conditions, and provide a wealth of service flexibility so that services remain reliable under a broad set of use cases.

The Road to 10G…

At CableLabs, we’ve taken big leaps toward 10G with DOCSIS® 4.0, including Full Duplex DOCSIS, and with cable modems (CMs) which will be capable of 5 Gbps symmetrical service in the near future. To fully arrive at 10G, we need to enable 10 Gbps downstream speeds. To accomplish that, we’ll need to expand our use of available spectrum, and we’ll likely need to use that spectrum in a highly efficient manner. Pushing higher bandwidth solutions deeper into the network and closer to the edge customers will be required, too. We have a lot of innovation ahead of us to get to the 10G future.
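As a rough back-of-the-envelope illustration of why both more spectrum and more efficient use of it matter, throughput can be approximated as bandwidth multiplied by spectral efficiency. The sketch below ignores all overhead (error correction, pilots, guard bands), and the numbers are illustrative assumptions rather than DOCSIS figures.

```python
def approx_throughput_gbps(bandwidth_mhz, bits_per_second_per_hz):
    """Idealized throughput: bandwidth times spectral efficiency."""
    return bandwidth_mhz * 1e6 * bits_per_second_per_hz / 1e9

def required_bandwidth_mhz(target_gbps, bits_per_second_per_hz):
    """Spectrum needed to reach a target rate at a given efficiency."""
    return target_gbps * 1e9 / bits_per_second_per_hz / 1e6

# Illustrative only: how much spectrum 10 Gbps would need at two efficiencies.
for efficiency in (8, 10):
    print(efficiency, required_bandwidth_mhz(10, efficiency))
```

Either lever helps: a higher spectral efficiency lowers the spectrum needed for the same rate, and more spectrum raises the rate at the same efficiency.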

…Is Paved with Innovation

Invention often begins with an initial solution that is later repeated for verification, then validated further. That initial solution then needs to be scaled; in other words, it needs to be made repeatable, at a low cost, and with sufficient reliability.

Fortunately, DOCSIS networking is a technology with many reliability traits integrated. Data are delivered reliably due to Forward Error Correction. Profile management can control the data rate to allow the best performance possible, but not push performance to low reliability. Adjustments to the connection between the cable modem termination system (CMTS) and CM assure reliable transmission continues under constant environmental and network changes. And Proactive Network Maintenance (PNM) assures that plant conditions are discoverable, and that they can be translated into maintenance activities that can further assure services stay reliable at low cost. The cable industry is starting on a solid foundation.
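The profile management idea, getting the best data rate without pushing into unreliable territory, can be sketched as picking the highest modulation order whose signal-quality requirement is still met with some safety margin. The thresholds and the margin below are placeholder assumptions for illustration; they are not values from the DOCSIS specifications, and real profile management is considerably more involved.

```python
# Hypothetical (modulation, minimum MER in dB) pairs, highest order first.
# These thresholds are placeholders, NOT values from any specification.
ILLUSTRATIVE_PROFILES = [
    ("QAM-4096", 41.0),
    ("QAM-1024", 35.0),
    ("QAM-256", 29.0),
    ("QAM-64", 23.0),
]

def choose_profile(measured_mer_db, margin_db=2.0, profiles=ILLUSTRATIVE_PROFILES):
    """Return the highest-order modulation whose minimum MER is still met
    after subtracting a safety margin, or None if none qualifies."""
    usable_mer_db = measured_mer_db - margin_db
    for name, min_mer_db in profiles:
        if usable_mer_db >= min_mer_db:
            return name
    return None

print(choose_profile(38.0))  # QAM-1024 with the placeholder thresholds
```

The margin is the reliability knob in this sketch: a larger margin gives up some speed in exchange for more tolerance to changing plant conditions.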

Consider one possible direction we could take on the road to 10G. As we begin to expand the frequencies that DOCSIS uses, we may need improved error correction, better profile management, or better CMTS-to-CM coordination to assure reliable services continue at expected levels. However, pushing these limits might also mean new failure modes in the plant, or greater service sensitivity to existing failure modes, thus increasing the importance of PNM. Operators should up their PNM game now, understanding that it will be an even more important element to assure a reliable 10G future.

A Super Highway in Many Directions

Because of this strong reliability foundation in cable technologies, particularly DOCSIS, we can build our 10G future with reliability in mind. Rather than simply extending our boundaries and hoping that our existing methods to assure reliable services will be sufficient, we can define solutions that bring reliability with them. By focusing simultaneously on increased performance, lower operational costs, and reliable services, we can evolve into an effective, desirable 10G future for the world.

Also, by thoughtfully choosing the technologies to develop, we can create degrees of freedom and opportunities to enhance reliability while developing 10G. This is the right approach for the industry to take because reliability can only be built into a service, not added later. By choosing to develop solutions now that expand our options for reliable services, we can enable operators to have full control of their services. To make it work reliably, PNM will be there, and so will a few other advantages to come.

Wired

Cable Network Reliability: ProOps Platform for PNM and More!

Cable network reliability has many important dimensions. Operators are all too familiar with the significant cost of maintenance and repair, and some are familiar with the advantages of Proactive Network Maintenance (PNM). But not everyone has taken full advantage of PNM. Let’s have a look at some of the reasons for that, and what CableLabs is doing to address those needs as part of its PNM project.

The Proactive Operations Problem

CableLabs has been informally assessing the reasons why more operators don’t take advantage of the proactive gift that DOCSIS® provides: the ability to use PNM data to find problems in the network before they become impactful and costly.

It takes a lot of work to implement solid PNM solutions that keep working. A key task in operations is to make decisions based on data. That takes expertise and time. Not every operator or vendor has an expert army in place to analyze all the available operations data to find proactive maintenance work worth doing. Machine learning is anticipated to help, but it will require a lot of work to apply those techniques successfully to an operations task like PNM, and even more to develop the needed controls. Likewise, not every operator or vendor has a statistical analysis or IT army in place to build enterprise tools to automate the process of turning data into action.

Some operators need to start small with testing PNM concepts to find a solution that fits their needs. That means many operators must experiment and learn first. But that requires basic, general tools in hand before experimentation can begin.

A ProOps Platform for Everyone

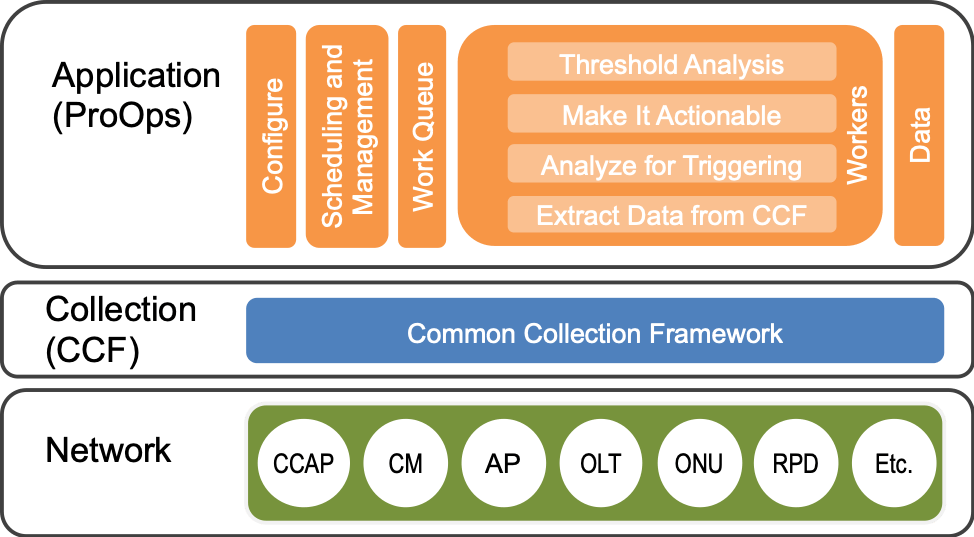

Figure 1. ProOps with its elements and workers in four layers, built on CCF, on top of the network.

CableLabs created a generalized process for translating data into operations actions and applied it to PNM. Then we built the Proactive Operations (ProOps) platform to enable this process, thus making it easy for everyone to try, develop, deploy and make full use of PNM.

ProOps translates network data into action through a framework that is not strictly enforced but is enabled and supported to better ensure effective proactive maintenance.

The steps we identify for turning network data into action are briefly as follows, moving up from the network, through data collection, and through the worker layers of ProOps in Figure 1.

- Extract Data from the Common Collection Framework (CCF)—ProOps uses CCF to extract the data it needs from the network, then applies basic analysis to translate the data into useful information.

- Analyze for Triggering—Next, the results are analyzed further to determine whether they are interesting or not; interesting results are “triggered” for deeper scrutiny. The data are looked at over time and across data sources to orient the information into context.

- Make It Actionable—Once we find the most interesting network elements to watch, we group network elements into network tasks and provide a measure of importance for the identified work.

- Threshold Analysis—The best work opportunities get picked to become proactive work packages, which can be selected based on impact to customers, likelihood of becoming an emergency, and so on.
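The four steps above can be sketched as a small pipeline. This is only an illustration of the flow, not the ProOps implementation or its API: the function names, the per-modem impairment scores, the modem-to-node map, and the thresholds are all hypothetical.

```python
from collections import defaultdict

def analyze(samples):
    """Step 1: reduce raw per-device samples to one summary value each."""
    return {device: sum(values) / len(values) for device, values in samples.items()}

def trigger(summaries, limit):
    """Step 2: keep only the devices whose summary looks interesting."""
    return {device: value for device, value in summaries.items() if value > limit}

def group_into_tasks(triggered, device_to_node):
    """Step 3: group triggered devices by the network node they share."""
    tasks = defaultdict(list)
    for device in triggered:
        tasks[device_to_node[device]].append(device)
    return {node: sorted(devices) for node, devices in tasks.items()}

def select_work(tasks, min_devices):
    """Step 4: pick tasks affecting enough devices, most affected first."""
    chosen = [(node, devices) for node, devices in tasks.items()
              if len(devices) >= min_devices]
    return sorted(chosen, key=lambda item: (-len(item[1]), item[0]))

# Hypothetical impairment scores per cable modem and a toy topology.
samples = {"cm1": [3, 5], "cm2": [0, 1], "cm3": [4, 4], "cm4": [6, 2]}
topology = {"cm1": "node-A", "cm2": "node-A", "cm3": "node-A", "cm4": "node-B"}

triggered = trigger(analyze(samples), limit=2.0)
work = select_work(group_into_tasks(triggered, topology), min_devices=2)
print(work)
```

Keeping each step a separate function mirrors the layered structure in Figure 1 and lets an operator swap in a different trigger or selection rule without touching the rest.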

You ShOODA Get ProOps!

The steps we outline for turning network data into action—or in this case pro-action—align nicely with the well-known strategy of observe, orient, decide, act (OODA). This OODA loop, or OODA process, was created by U.S. Air Force Colonel John Boyd for combat operations. The operations of combating network failure aren’t much different! If you work as a cable operator, then you know.

ProOps is available upon request to any operator member or vendor of the CableLabs community. CableLabs supports users by helping them to deploy ProOps with an example application that shows how to configure it to a specific operator or use case, and we will help our members develop solutions in it, too. Just contact Jason Rupe to get your copy.

Our goal is to help operators provide highly reliable service, and efficient, effective operations are one proven way to do that. ProOps is the latest tool to combat network failures.

Wired

Proactive Network Maintenance (PNM): Cable Modem Validation Application(s)

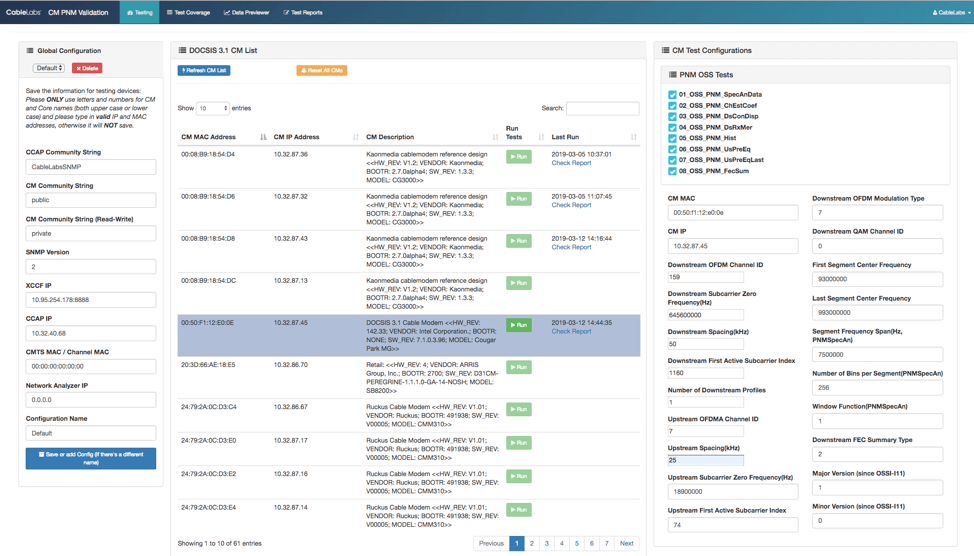

Sometimes, two apps are better than one. We now have two versions of the Cable Modem Validation Application (CMVA) available for download and use: a new lab automation version, and a data exploration version.

Thing One and Thing Two

Lab automation and certification have unique requirements, but investigation and invention require flexibility. Because the CMVA found value as a cable modem (CM) data plotter and browser on top of its original purpose as a lab testing tool, we decided there should be two versions—one focused on each use case.

Sometimes You Feel Like a DUT

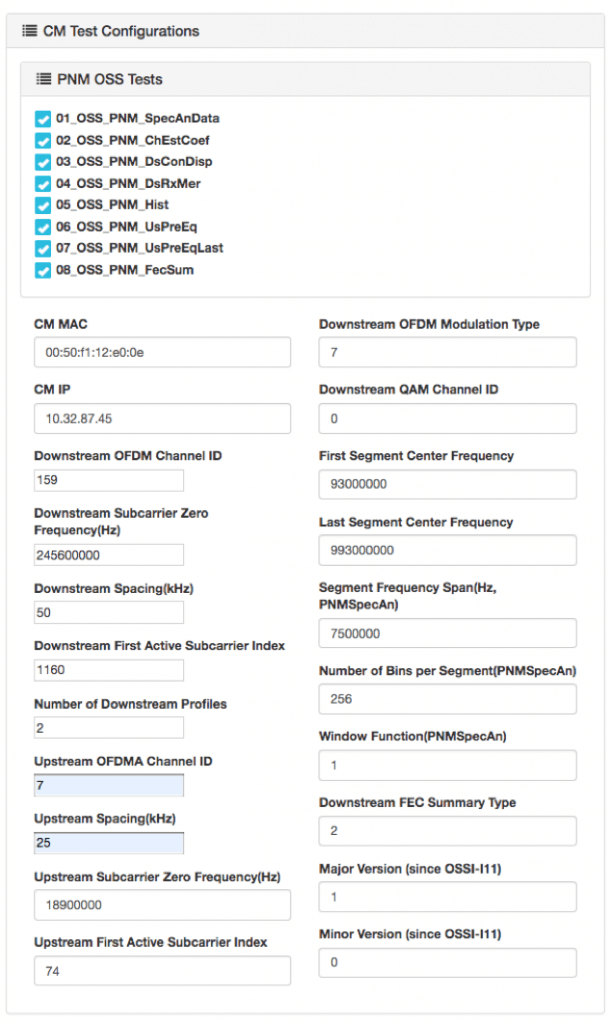

The newest, most complex version of CMVA is built specifically for CM Cert-Lab testing and includes several new features and automations:

- Improved efficiency for CMVA on certification testing: CMVA now discovers OFDM/OFDMA-based topology information from the CMTS and loads all related channel configuration information automatically for testing. CMVA also synchronizes PNM SNMP SET command parameters with XCCF for better efficiency and greater control.

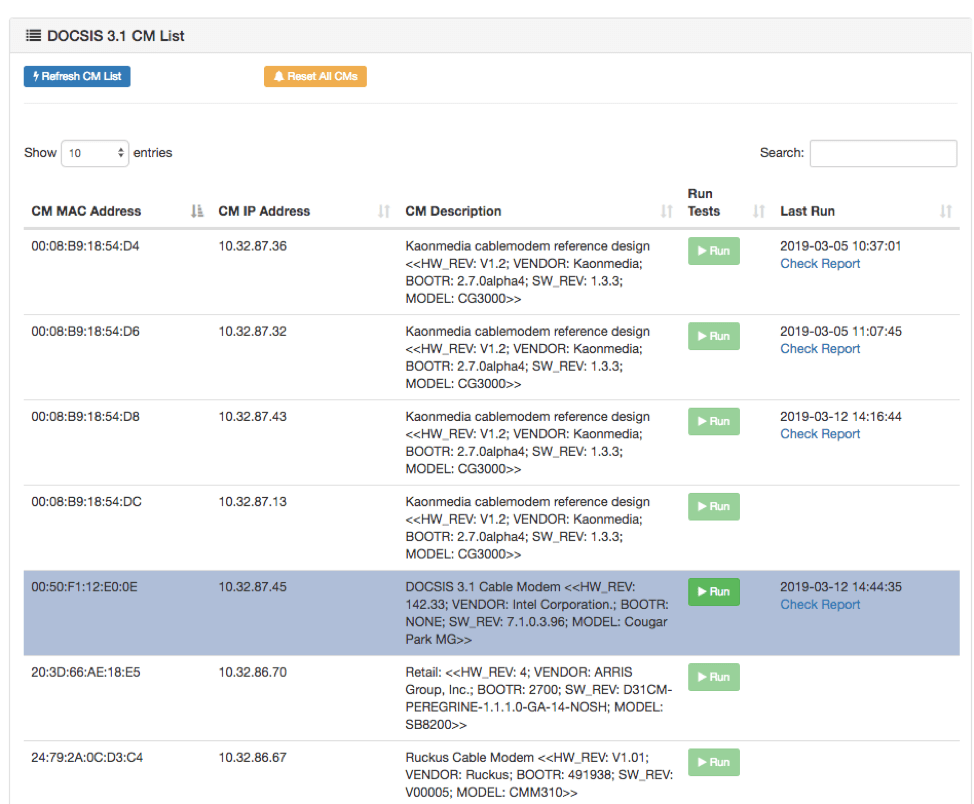

- Automated discovery of the active DOCSIS® 3.1 CM list: Users can easily select CMs with their test configurations automatically filled to start tests with a few clicks.

- CMVA now runs multiple PNM tests sequentially on multiple CMs in parallel with simple clicks on a single user login: The latest test reports are directly served from the CM table. Different users are handled in parallel, as previously.

- CMVA now embeds detailed testing logs into the HTML test report: The log file can be downloaded from the HTML test report. The HTML test report is portable.

- CMVA now keeps copies of raw PNM test files together with the test reports for vendor debugging references: When downloading the test reports, CMVA packages the raw-text test logs and the portable HTML test report into a single archive.

- All the Acceptance Test Plan (ATP) calculation activities are placed in the log file for vendor debugging references.

- We added a function for resetting CMs remotely with one click: This is important for testing and useful for other purposes.
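The scheduling pattern described in the list above, several tests run in sequence on each modem while multiple modems are handled in parallel, can be sketched with Python's standard thread pool. This is not CMVA code: `run_one` is a hypothetical callable standing in for whatever actually executes a single PNM test, and the modem and test names are placeholders.

```python
from concurrent.futures import ThreadPoolExecutor

def run_tests_on_modem(modem, tests, run_one):
    """Run each test in order on one modem and collect (test, result) pairs."""
    return [(test, run_one(modem, test)) for test in tests]

def run_all(modems, tests, run_one, max_workers=4):
    """Handle modems in parallel; tests stay sequential within each modem."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {
            modem: pool.submit(run_tests_on_modem, modem, tests, run_one)
            for modem in modems
        }
        return {modem: future.result() for modem, future in futures.items()}

def fake_run(modem, test):
    """Placeholder for a real test; it only echoes its inputs."""
    return f"{modem}:{test}:ok"

print(run_all(["cm-a", "cm-b"], ["test-1", "test-2"], fake_run))
```

Threads suit this kind of work because a test spends most of its time waiting on the device rather than computing.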

Figure 1: New layout for test and configuration management

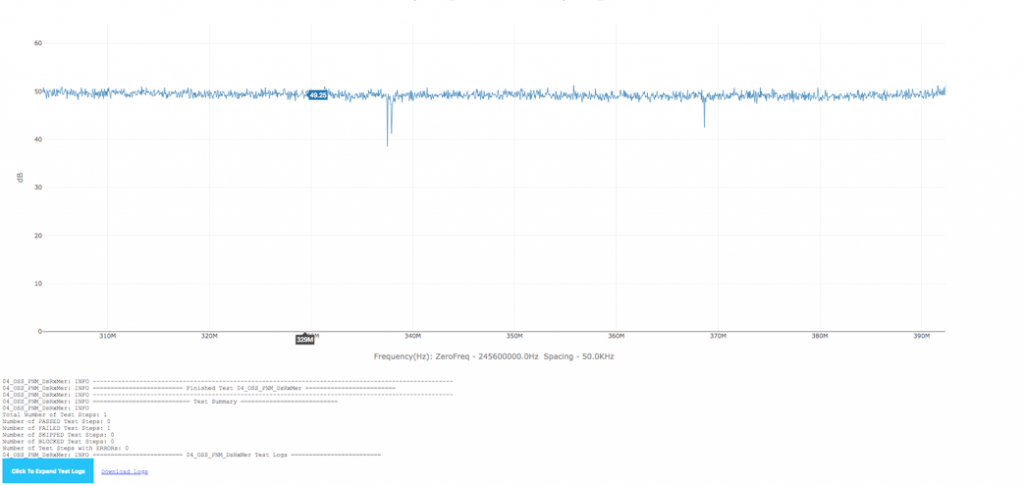

Figure 2: Select CM directly from the table to start tests; the latest reports are linked directly in the table for convenience

Figure 3: The test procedures run last time are tracked, and the configurations are automatically filled

Figure 4: Detailed test logs are embedded directly into the portable HTML test report and can be downloaded as a plain-text log

All these new features are important for test automation, but some of them are useful for other needs. Go nuts! But if you simply want the basic capabilities that CMVA always provided, you can still get that version.

Sometimes You Don’t

Sometimes you just want a simple way to poll a set of modems and see what you can get. The previous version is a bit simpler, but it still has the validation capabilities if you need them. So, it might be the version that can address most, if not all, of your needs. We use it for many purposes but mainly as a testing and development tool. Here are some specific use cases we’ve encountered:

- Testing ideas in the lab: The PNM Working Group InGeNeOS conducted lab testing, as reported on before, and we used CMVA to grab data from CMs under test.

- Developing applications: As we work to develop our first large-scale PNM base application, inside our prototype PNM Application Environment, we use CMVA to develop theories about how the data can be handled in automated processing.

- Building reports and documenting: So often, we need to capture what certain impairments look like, or obtain a good visualization of a PNM measurement, and CMVA makes that handy.

- Investigating issues: With CMVA, it’s a simple matter to collect data from a pool of CMs and compare the results. This helps us investigate many issues, including changes in firmware versions, CM responsiveness, and other potential issues with plant configuration, software changes and so on.

- Combined Common Collection Framework (XCCF) development and testing: As we develop new capabilities with our XCCF, we can use CMVA to validate its functionality.
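As an illustration of the "investigating issues" use case above, comparing results from a pool of CMs often comes down to grouping records by some attribute and comparing a summary statistic. The sketch below assumes the collected data has already been exported as a list of dictionaries; the field names `firmware` and `response_ms` and the values are hypothetical, not a CMVA export format.

```python
from collections import defaultdict
from statistics import median

def median_by_group(records, group_key, value_key):
    """Group records by one field and return the median of another per group."""
    groups = defaultdict(list)
    for record in records:
        groups[record[group_key]].append(record[value_key])
    return {group: median(values) for group, values in groups.items()}

# Hypothetical poll results from a small pool of cable modems.
records = [
    {"cm": "cm-1", "firmware": "v1", "response_ms": 100},
    {"cm": "cm-2", "firmware": "v1", "response_ms": 300},
    {"cm": "cm-3", "firmware": "v2", "response_ms": 120},
]
print(median_by_group(records, "firmware", "response_ms"))
```

The median is used here so that one slow or misbehaving modem does not dominate the comparison between firmware versions.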

If you are a user of CMVA, let us know how you have used it!

Two Can Play at That Game

Although the more complicated testing tool can be used for all these use cases and many more, some users don’t need the automation, overhead and many controls required for automated testing. When you contact us to get an updated version of CMVA, please let us know what you would like to use it for. That way, we can offer you the right version.

Wired

Proactive Network Maintenance (PNM): Are You InGeNeOS?

I love a good acronym! InGeNeOS™ is an acronym built from Intelligent General Next Operations Systems. It’s the name of a CableLabs working group that solves Proactive Network Maintenance (PNM) issues for the cable industry, and it might be for you.

What’s So InGeNeOS about PNM?

The InGeNeOS group focuses on discussing, inventing, building and sharing network operations tools and techniques from the data made available from DOCSIS® systems, including the CM, CMTS and test devices. Other CableLabs working groups focus on DOCSIS specifications, and the SCTE Network Operations Subcommittee Working Group 7 focuses on network operations training material. The InGeNeOS group connects these two worlds and turns the network information into capabilities that engineers and technicians can use to maintain services. We turn DOCSIS system information into solutions that identify, diagnose and sometimes automatically correct network problems—often before the customer notices. When these tools get good enough, they can become proactive. Thus, we often refer to this group as the PNM Working Group (WG). See why we put it into an acronym?

Don’t Just Think—Do!

This group doesn’t merely ponder PNM solutions; it is very active in several ways:

- Developing best practices for PNM solutions—We just started an effort to document PNM best practices in a DOCSIS 3.1 environment.

- Guiding specifications development for emerging technologies—For example, although Full Duplex (FDX) DOCSIS technology is not yet deployed, we know it must be fully ready when it is, and that includes being operationally supportable.

- Sharing experiences, both problems and solutions—Many working group participants work maintenance problems at operator companies, or for operators, so they bring problems to the working group to get ideas for causes and resolutions.

- Testing theories in the lab—Once we develop theories about the causes of problems in the field, we reproduce the theorized conditions in the lab to confirm the cause. We can also calibrate measurements, test methods for detection and develop new PNM tools and methods based on these tests.

This developing, defining, knowledge sharing and testing help operators reduce costs and improve service reliability by improving their network maintenance operations. All these are just examples of what we do. If you have ideas that might fit within this framework, keep reading.

So You Think You’re InGeNeOS?

Operators in—and vendors supporting—the cable industry can easily benefit from joining the InGeNeOS group:

- If you are a cable operator and a CableLabs member, consider this your invitation to join.

- If you are a cable operator but not a member, this is a very good reason to become a member.

- If you are a vendor, all you need to do is sign the NDA and IPR agreements.

In any case, contact Jason Rupe to join the InGeNeOS group.